Gia

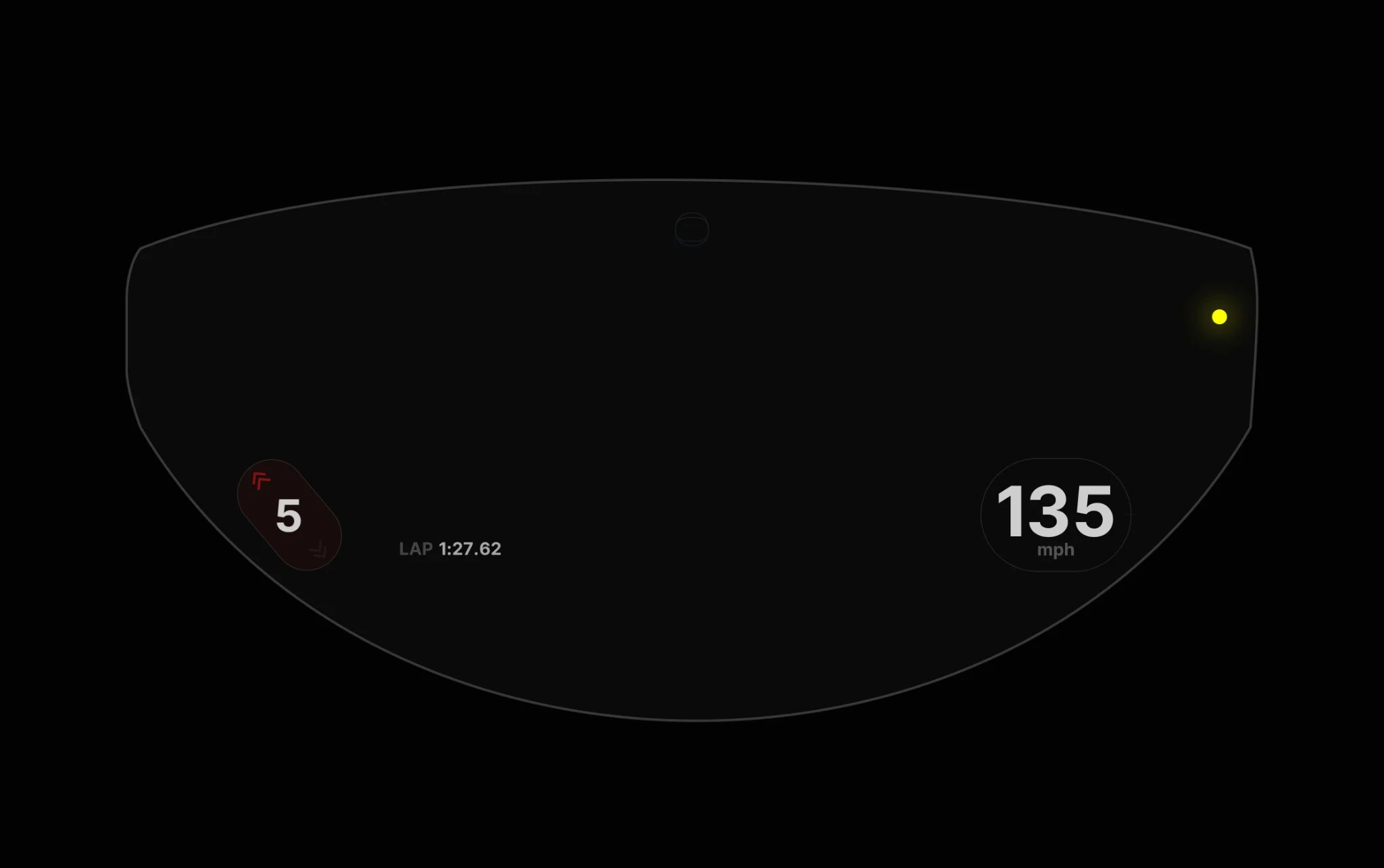

Designing a human–AI safety system for attention-critical environments.

Context

Motorcycling is a high-cognitive-load, safety-critical activity. Riders continuously manage navigation, road conditions, speed, timing, and environmental hazards. Attention is fully occupied.

Most technology designed for riders follows one of two flawed models. Either it increases visual load through dashboards and alerts, or it intervenes reactively in ways that interrupt rather than support.

After experiencing a serious motorcycle accident, I began questioning the assumptions behind assistive technology. What would an AI system look like if it treated attention as a finite resource?

Gia began as an exploration of that question.

The Problem Space

This was not an interface problem. It was a human–AI coordination problem under strict constraints:

- Visual attention is already saturated

- Reaction time is critical

- Interruptions increase risk

- Riders cannot manage complex systems mid-ride

The core challenge became clear:

How do you design an AI system that informs without distracting and assists without taking control?

In safety-critical contexts, less information is often more useful than more intelligence.

Design Principles

Gia was defined as a safety system from the start.

- Success was measured by reduced cognitive load, not feature breadth.

- Interaction that pulled attention from the road was considered failure.

- Voice and ambient feedback were prioritized over visual interfaces to keep eyes and hands free.

- AI was framed as an adaptive partner that supports rider judgment rather than overrides it.

- The system learns gradually over time instead of demanding configuration upfront.

Restraint became the primary design principle.

System Concept

Gia functions as an AI riding companion that:

- Observes riding patterns and environmental signals

- Anticipates moments where guidance improves safety

- Communicates selectively through voice or ambient cues

- Learns rider thresholds and preferences over time

The system does not surface everything it knows. It intervenes only when clarity or safety meaningfully improves.

Guidance is contextual, brief, and timed to moments of availability.

The emphasis is not on constant assistance. It is on calibrated intervention.
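The selective behavior described above can be expressed as a small decision policy. The sketch below is hypothetical and is not Gia's implementation: the names `Cue`, `InterventionPolicy`, `decide`, and `record_feedback`, the 0-to-1 scores for benefit and rider load, the specific thresholds, and the rule that urgent hazards skip the availability check are all illustrative assumptions. It shows the three outcomes the text implies (stay silent, speak now, or wait for a moment of availability) and a threshold that shifts slowly with rider feedback instead of being configured upfront.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    text: str
    benefit: float        # hypothetical 0..1 estimate of the safety/clarity gain
    urgent: bool = False  # assumption: imminent hazards skip the availability check


@dataclass
class InterventionPolicy:
    benefit_threshold: float = 0.6  # hypothetical starting value, learned over time
    load_ceiling: float = 0.7       # above this estimated load, non-urgent cues wait
    learning_rate: float = 0.05     # small step so adaptation stays gradual

    def decide(self, cue: Cue, rider_load: float) -> str:
        """Return 'drop', 'speak', or 'defer' for a candidate cue."""
        if cue.benefit < self.benefit_threshold:
            return "drop"   # not worth the rider's attention
        if cue.urgent or rider_load <= self.load_ceiling:
            return "speak"  # worth saying, and the rider can take it in now
        return "defer"      # worth saying, but wait for a moment of availability

    def record_feedback(self, useful: bool) -> None:
        # Useful cues lower the bar slightly; dismissed cues raise it slightly.
        step = -self.learning_rate if useful else self.learning_rate
        # Clamp so the system never becomes fully silent or fully chatty.
        self.benefit_threshold = min(0.95, max(0.2, self.benefit_threshold + step))


if __name__ == "__main__":
    policy = InterventionPolicy()
    print(policy.decide(Cue("Turn left in 200 m", benefit=0.8), rider_load=0.9))  # defer
    print(policy.decide(Cue("Gravel on exit", benefit=0.9, urgent=True), rider_load=0.9))  # speak
    print(policy.decide(Cue("Fuel price nearby", benefit=0.3), rider_load=0.2))  # drop
```

The small learning rate and the clamped range mirror the principle of gradual adaptation: one dismissed cue makes the system slightly quieter, not silent.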

Design and Prototyping

My work included:

- Research into rider cognition and situational awareness

- Mapping moments of overload versus cognitive availability

- Prototyping voice interactions under real riding constraints

- Exploring how adaptive learning remains transparent and trustworthy

- Stress-testing concepts against real-world riding scenarios

I deliberately rejected interactions that were visually impressive but operationally unsafe.

Design decisions were filtered through a single question:

Does this reduce cognitive burden in motion?

Voice as Primary Interface

Voice was designed as the primary communication channel.

When eyes and hands are fully committed, spoken cues preserve situational awareness. Feedback is short and contextual. Turn reminders, hazard alerts, and route updates are delivered only when they meaningfully improve safety.

The goal was not conversational AI. It was precise intervention.

Outcomes and Recognition

Gia received the AI Design Award in 2025 for its human-centered approach to AI in mobility contexts.

The project demonstrated a viable design direction for AI systems operating under strict attentional constraints.

More importantly, it clarified a broader thesis:

AI value in safety-critical environments emerges through restraint, not visibility.

Gia continues to inform my thinking about humane AI, adaptive systems, and minimal intervention design.

Learnings

- Attention is the primary design constraint in safety-critical systems.

- AI effectiveness increases when systems know when not to act.

- Trust grows when assistance supports judgment rather than replaces it.

- Designing for safety often requires designing against feature accumulation.

Reflection

Gia reinforced a principle that shapes my work:

Human–AI systems should adapt to people, not ask people to adapt to them.

The hardest problems were not interface decisions. They were behavioral and ethical ones. Defining when to speak, when to remain silent, and how to build adaptive trust required reasoning about agency, responsibility, and risk.

This project strengthened my ability to design AI systems that operate in complex, real-world environments where attention, safety, and judgment are inseparable.